If you want to learn Python or are new to the world of programming, getting started can be quite tough. There are so many things to learn: coding, object-oriented programming, building desktop apps, creating web apps with Flask or Django, plotting, and even Machine Learning or Artificial Intelligence. For web scraping, I would recommend taking a look at the Beautiful Soup Python library. You have to install the module on your computer, but it is one of the most widely used and most user-friendly web scraping libraries, and it has extensive documentation.

Scraping data from a website is one of the most underestimated technical and business moves I can think of. Any business owner can scrape data online to build a copy of the data a competitor publishes on its website and analyze it to maximize profit. It can also be used to analyze a specific market and find potential customers. The best thing is that it is all free of charge; it only needs some technical skills, which many people have these days. In this post I am going to give a step-by-step tutorial on how to scrape data from a website and save it to a database. I will be using Beautiful Soup, a Python library designed to facilitate screen scraping, and MySQL as my database software. You can probably use the same code, with some minor changes, with your preferred database software.

Please note that scraping data from the internet can be a violation of the terms of service for some websites. Please do appropriate research before scraping data from the web and/or publishing the gathered data.

Web scraping, also called web data mining or web harvesting, is the process of constructing an agent which can automatically extract, parse, download and organize useful information from the web.

GitHub Page for This Project

I have also created a GitHub project for this blog post. I hope you will be able to use the tutorial and code and customize them according to your needs.

Requirements

- Basic knowledge of Python. (I will be using Python 3 but Python 2 would probably be sufficient too.)

- A machine with database software installed. (I have MySQL installed on my machine.)

- An active internet connection.

The following installation instructions are very basic. The installation process for Beautiful Soup, Python, etc. may be more complicated on your system, but since it differs across platforms and individual machines, it is outside the main subject of this post: scraping data from a website and saving it to a database.

Installing Beautiful Soup

In order to install Beautiful Soup, open a terminal and execute one of the following commands, depending on your version of Python. Please note that you may need to prefix the commands with “sudo” to give them administrative access.

For Python 2 (if pip does not work, try pip2):

pip install beautifulsoup4

For Python 3:

pip3 install beautifulsoup4

If the above did not work, you can also use the following command.

For Python 2:

For Python 3:

If you were not able to install beautiful soup, just Google the term “How to install Beautiful Soup” and you will find plenty of tutorials.

Installing MySQLdb

In order to be able to connect to your MySQL databases through Python, you will have to install the MySQLdb library for your Python installation. Since MySQLdb does not support Python 3 at the time of this writing, you will need to use a different library there: mysqlclient, a fork of MySQLdb that adds Python 3 support and some other improvements. It is still imported as “MySQLdb”, so using it is basically identical to using MySQLdb on Python 2.

To install MySQLdb on Python 2, open a terminal and execute the following command:

pip install MySQL-python

To install mysqlclient on Python 3, open a terminal and execute the following command:

pip3 install mysqlclient

Installing requests

requests is a Python library for sending HTTP requests; we use it to load the HTML of a URL. To install it on your machine, follow the instructions below.

To install requests on Python 2, open a terminal and execute the following command:

pip install requests

To install requests on Python 3, open a terminal and execute the following command:

pip3 install requests

Now that we have everything installed and running, let’s get started.

Step by Step Guide on Scraping Data from a Single Web Page

I have created a page with some sample data which we will be scraping data from. Feel free to use this url and test your code. The page we will be scraping in the course of this article is https://howpcrules.com/sample-page-for-web-scraping/.

1. First of all we have to create a file called “scraping_single_web_page.py”.

2. Now, we will start by importing the libraries requests, MySQLdb and BeautifulSoup:

3. Let us create some variables where we will be saving our database connection data. To do so, add the below lines to your “scraping_single_web_page.py” file.
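Here is a minimal sketch of those variables. The variable names and the host and password values are placeholders of my own; only the database and user names come from step 10.

```python
# Database connection data (hypothetical placeholder values; replace with your own).
# The database and user names match the ones created in step 10.
db_host = "localhost"
db_user = "scraping_sample_user"
db_password = "change-me"  # placeholder; never commit a real password
db_name = "scraping_sample"
```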

4. We also need a variable to hold the URL to be scraped. After that, we will use the imported library “requests” to load the web page's HTML into the variable “plain_html_text”. In the next line, we will use BeautifulSoup to parse that HTML into “soup”, an object that represents the page as a navigable tree of tags and will be a big help to us in reading out the web page's content efficiently.
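A sketch of step 4 under a few assumptions: the variable names follow the text, while wrapping the download in a function (load_soup, a hypothetical name), the 10-second timeout and the raise_for_status() check are my additions. "html.parser" is Python's built-in parser, so it needs no extra install.

```python
import requests
from bs4 import BeautifulSoup

# The sample page used throughout this tutorial
url_to_scrape = "https://howpcrules.com/sample-page-for-web-scraping/"


def load_soup(url):
    """Download a page and parse its HTML into a BeautifulSoup tree."""
    # requests.get returns a Response object; its .text attribute holds the decoded HTML
    plain_html_text = requests.get(url, timeout=10)
    plain_html_text.raise_for_status()  # fail loudly on 4xx/5xx responses
    return BeautifulSoup(plain_html_text.text, "html.parser")
```

With this in place, soup = load_soup(url_to_scrape) gives you the parsed page used in the following steps.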

Your whole code should look like this so far:

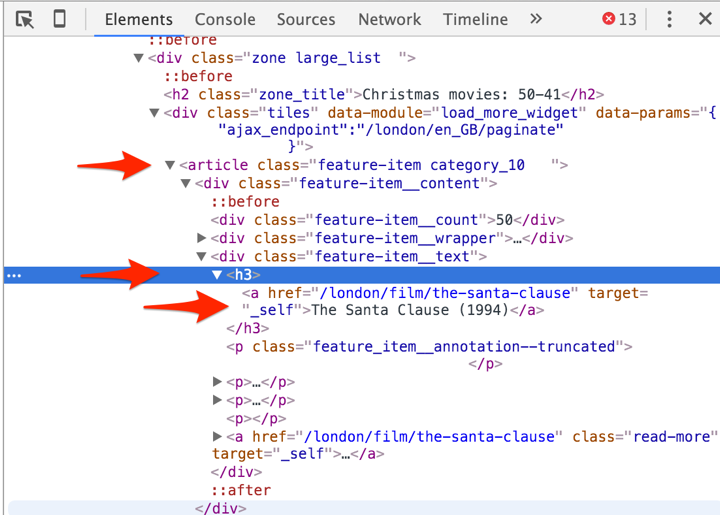

These few lines of code were enough to load the data from the web and parse it. Now we will start the task of finding the specific elements we are searching for. To do so, we have to take a look at the page's HTML and find the elements we want to save. All major web browsers offer an option to view a page's HTML source. If you cannot view it in your browser, you can also add the following code to the end of your “scraping_single_web_page.py” file to print the loaded HTML in your terminal window.
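The debugging line is a single call to prettify(). The sketch below parses a small stand-in HTML string (not the real sample page) so that it runs without a network connection:

```python
from bs4 import BeautifulSoup

# Stand-in HTML so the sketch runs offline; in the tutorial, `soup` is built from the sample page
soup = BeautifulSoup("<html><body><h3>Example</h3></body></html>", "html.parser")

# prettify() returns the parsed HTML as a string with one tag or text node per line,
# indented by nesting depth, which is much easier to read in a terminal
print(soup.prettify())
```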

To execute the code, open a terminal, navigate to the folder containing your “scraping_single_web_page.py” file and run it with “python scraping_single_web_page.py” on Python 2 or “python3 scraping_single_web_page.py” on Python 3. You will now see the HTML printed in your terminal window.

5. Scroll down until you find my HTML comment “<!-- Start Sample Data to be Scraped -->”. This is where the actual data we need starts. As you can see, the name of the class, “Exercise: Data Structures and Algorithms”, is written inside an <h3> tag. Since this is the only h3 tag on the whole page, we can use “soup.h3” to get this specific tag and its contents. We will now use the following command to get the tag containing the name of the class and save its text into the variable “name_of_class”. We will also use Python's strip() function to remove any whitespace to the left and right of the text. (Please note that the line print(soup.prettify()) was only there to print out the HTML and can be deleted now.)
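A sketch of step 5, using a hypothetical stand-in for the relevant part of the sample page rather than its real HTML:

```python
from bs4 import BeautifulSoup

# Hypothetical stand-in for the relevant part of the sample page
html = """
<!-- Start Sample Data to be Scraped -->
<h3>
    Exercise: Data Structures and Algorithms
</h3>
"""
soup = BeautifulSoup(html, "html.parser")

# soup.h3 returns the first <h3> tag in the document (the only one on the sample page);
# .text gives its contents, and strip() removes the surrounding spaces and newlines
name_of_class = soup.h3.text.strip()
print(name_of_class)
```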

6. If you scroll down a little, you will see that the table with the basic information about the class is identified by summary="Basic data for the event" inside its <table> tag. So we will save the parsed table in a variable called “basic_data_table”. If you take a closer look at the tags inside the table, you will notice that the data itself, as opposed to its titles, is stored inside <td> tags. These <td> tags have the following order from top to bottom:

According to the above, all text inside the <td> tags is relevant and needs to be stored in appropriate variables. To do so, we first have to parse all <td>s inside our table.

7. Now that we have all <td>s stored in the variable “basic_data_cells”, we have to go through the variable and save the data accordingly. (Please note that list indices in Python start from zero, so each number in the above picture is one higher than the corresponding index.)
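Steps 6 and 7 can be sketched as follows. The table is a hypothetical two-row stand-in, and type_of_event is an illustrative name; in this stand-in, language sits at index 1, whereas on the real sample page it is at index 12 (see step 12).

```python
from bs4 import BeautifulSoup

# Hypothetical stand-in table; the real one on the sample page has many more rows
html = """
<table summary="Basic data for the event">
  <tr><th>Type of event</th><td>Exercise</td></tr>
  <tr><th>Language</th><td> English </td></tr>
</table>
"""
soup = BeautifulSoup(html, "html.parser")

# Step 6: locate the table by its summary attribute, then collect its <td> cells in document order
basic_data_table = soup.find("table", {"summary": "Basic data for the event"})
basic_data_cells = basic_data_table.find_all("td")

# Step 7: read each cell by its zero-based index
type_of_event = basic_data_cells[0].text.strip()
language = basic_data_cells[1].text.strip()
```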

8. Let's continue with the course dates. As with the previous table, we have to parse the tables where the dates are written. The only difference is that for the dates, there is not just one table to be scraped but several. To do so, we will use the following to scrape all tables into one variable:

9. We now have to go through all the tables and save the data accordingly. This means we have to create a for loop to iterate through the tables. In each table, there is always one header row (<tr> tag) with <th> cells inside (no <td>s). After the header there can be one or more rows with the data we are interested in. So inside our for loop over the tables, we also have to iterate through the individual rows (<tr>s) and keep only those with at least one cell (<td>) inside, which excludes the header row. Then we only have to save the contents of each cell into appropriate variables.

This all is translated into code as follows:
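A minimal sketch of steps 8 and 9, assuming the date tables carry a summary attribute; the value "Event dates" is a hypothetical placeholder, so check the real attribute with your browser's inspector. Instead of overwriting variables on each pass, this version collects every data row into a list, which makes the result easy to inspect or to write to the database afterwards.

```python
from bs4 import BeautifulSoup

# Hypothetical stand-in: two date tables, each with a header row (<th> only) and data rows (<td>)
html = """
<table summary="Event dates">
  <tr><th>Day</th><th>Time</th></tr>
  <tr><td>Mon</td><td>10:00</td></tr>
  <tr><td>Wed</td><td>12:00</td></tr>
</table>
<table summary="Event dates">
  <tr><th>Day</th><th>Time</th></tr>
  <tr><td>Fri</td><td>14:00</td></tr>
</table>
"""
soup = BeautifulSoup(html, "html.parser")

# Step 8: find_all returns every matching table, not just the first one
date_tables = soup.find_all("table", {"summary": "Event dates"})

events = []
for table in date_tables:
    for row in table.find_all("tr"):
        cells = row.find_all("td")
        # Header rows contain only <th> cells, so skipping rows without <td> excludes them
        if len(cells) > 0:
            # In the tutorial, each cell goes into its own variable here
            # (e.g. max_participants = cells[9].text.strip()) before being saved
            events.append([cell.text.strip() for cell in cells])

print(events)
```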

Please note that the above code reads the data and overwrites the same variables over and over again, so we have to create a database connection and save the data in each iteration.

Saving Scraped Data into a Database

Now, we are all set to create our tables and save the scraped data. To do so please follow the steps below.

10. Open your MySQL software (phpMyAdmin, Sequel Pro etc.) on your machine and create a database with the name “scraping_sample”. You also have to create a user with the name “scraping_sample_user”. Do not forget to give the user “scraping_sample_user” at least write privileges on the database “scraping_sample”.


11. After you have created the database, navigate to the “scraping_sample” database and execute the following command in your MySQL command line.

Now you have two tables with the classes in the first one and the corresponding events in the second one. We have also created a foreign key from the events table to the classes table. We also added a constraint to delete the events associated with a class if the class is removed (on delete cascade).
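The foreign key with ON DELETE CASCADE can be illustrated in Python with the built-in sqlite3 module, used here as a stand-in for MySQL so the sketch runs without a database server. The column names are hypothetical, and SQLite syntax differs slightly from MySQL (for example, SQLite uses AUTOINCREMENT where MySQL uses AUTO_INCREMENT).

```python
import sqlite3

# In-memory SQLite database standing in for the MySQL "scraping_sample" database
conn = sqlite3.connect(":memory:")
# SQLite enforces foreign keys only when this pragma is on; MySQL's InnoDB engine enforces them by default
conn.execute("PRAGMA foreign_keys = ON")

# Hypothetical columns; the real tables hold all the fields scraped in steps 5-9
conn.executescript("""
CREATE TABLE classes (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    name TEXT NOT NULL
);
CREATE TABLE events (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    class_id INTEGER NOT NULL,
    max_participants TEXT,
    FOREIGN KEY (class_id) REFERENCES classes (id) ON DELETE CASCADE
);
""")

conn.execute("INSERT INTO classes (name) VALUES (?)", ("Exercise: Data Structures and Algorithms",))
conn.execute("INSERT INTO events (class_id, max_participants) VALUES (?, ?)", (1, "30"))

# Removing the class also removes its events, thanks to ON DELETE CASCADE
conn.execute("DELETE FROM classes WHERE id = 1")
remaining_events = conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
print(remaining_events)  # 0
```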

We can go back to our code “scraping_single_web_page.py” and start with the process of saving data to the database.

12. In your code, navigate to the end of step 7, the line “language = basic_data_cells[12].text.strip()” and add the following below that to be able to save the class data:

Here, we use the MySQLdb library to establish a connection to the MySQL server and insert the data into the table “classes”. After that, we execute a query to get back the id of the class we just inserted; the value is saved in the “class_id” variable. We will use this id to add the corresponding events to the events table.

13. We will now save each and every event into the database. To do so, navigate to the end of step 9, the line “max_participants = cells[9].text.strip()”, and add the following below it. Please note that the following must go directly below that line, inside the last if statement.

Here, we are using the variable “class_id” from step 12 to link the events to the class we just added.
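Steps 12 and 13 follow the usual Python DB-API pattern of cursor, execute and commit. To keep this sketch runnable without a MySQL server, it uses sqlite3 as a stand-in and hypothetical column names. With MySQLdb you would connect with MySQLdb.connect(host=..., user=..., passwd=..., db=...) and write %s instead of ? as the placeholder. The sketch also reads cursor.lastrowid, which MySQLdb cursors provide too, as an alternative to running a second query for the new class id.

```python
import sqlite3

# SQLite stand-in for the MySQL connection; both drivers follow Python's DB-API
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE classes (id INTEGER PRIMARY KEY AUTOINCREMENT, name TEXT, language TEXT);
CREATE TABLE events (id INTEGER PRIMARY KEY AUTOINCREMENT, class_id INTEGER, max_participants TEXT);
""")
cursor = conn.cursor()

# Step 12: insert the class; values are passed separately so the driver escapes them safely
name_of_class = "Exercise: Data Structures and Algorithms"
language = "English"
cursor.execute("INSERT INTO classes (name, language) VALUES (?, ?)", (name_of_class, language))
# lastrowid holds the id generated for the row just inserted
class_id = cursor.lastrowid

# Step 13: insert each scraped event, linking it to the class through class_id
scraped_events = [["30"], ["25"]]  # hypothetical rows, as produced by the date-table loop
for cells in scraped_events:
    max_participants = cells[0]
    cursor.execute(
        "INSERT INTO events (class_id, max_participants) VALUES (?, ?)",
        (class_id, max_participants),
    )

conn.commit()  # make the inserts permanent
```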

Scraping Data from Multiple Similar Web Pages

This is the easiest part of all. The code will work just fine if you have different but similar web pages you would like to scrape data from. Just put the whole code, excluding steps 1-3, in a for loop where the “url_to_scrape” variable is generated dynamically. I have created a sample script where the same page is scraped a few times over to illustrate this process. To check out that script and the fully working example of the above, go to my “Python-Scraping-to-Database-Sample” GitHub page.
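A sketch of that loop, with the fetching and parsing wrapped in functions. The URL pattern is a hypothetical example.com placeholder, fetch_html and scrape_many are names of my own, and the one-second pause between requests is an assumption meant to keep the load on the server low. Passing the fetch function as a parameter also makes it possible to test the loop without a network connection.

```python
import time

import requests
from bs4 import BeautifulSoup


def fetch_html(url):
    """Download a page and return its HTML as text."""
    response = requests.get(url, timeout=10)
    response.raise_for_status()  # stop on 4xx/5xx responses
    return response.text


def scrape_many(urls, fetch=fetch_html, delay=1.0):
    """Apply the single-page scraping logic to each URL in turn."""
    names_of_classes = []
    for url_to_scrape in urls:
        soup = BeautifulSoup(fetch(url_to_scrape), "html.parser")
        # Step 5 as an example; the remaining steps (tables, database inserts) would follow here
        names_of_classes.append(soup.h3.text.strip())
        time.sleep(delay)  # pause between requests so the server is not flooded
    return names_of_classes


# Hypothetical URL pattern: one URL per page number
urls = [f"https://example.com/classes?page={n}" for n in range(1, 4)]
```

Calling scrape_many(urls) would then fetch and scrape each page in turn.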

One of the awesome things about Python is how relatively simple it is to do pretty complex and impressive tasks. A great example of this is web scraping.

This is an article about web scraping with Python. In it we will look at the basics of web scraping using popular libraries such as requests and beautiful soup.

Topics covered:

- What is web scraping?

- What are requests and beautiful soup?

- Using CSS selectors to target data on a web-page

- Getting product data from a demo book site

- Storing scraped data in CSV and JSON formats

What is Web Scraping?

Some websites contain a large amount of valuable data. Web scraping means extracting data from websites, usually in an automated fashion using a bot or web crawler. The kinds of data available are as wide-ranging as the internet itself. Common tasks include:


- scraping stock prices to inform investment decisions

- automatically downloading files hosted on websites

- scraping data about company contacts

- scraping data from a store locator to create a list of business locations

- scraping product data from sites like Amazon or eBay

- scraping sports stats for betting

- collecting data to generate leads

- collating data available from multiple sources

Legality of Web Scraping

There has been some confusion in the past about the legality of scraping data from public websites. This has been cleared up somewhat recently (I'm writing in July 2020) by a court case in which the US Court of Appeals for the Ninth Circuit denied LinkedIn's request to prevent hiQ Labs, an analytics company, from scraping its data.

The decision was a historic moment in the data privacy and data regulation era. It showed that any data that is publicly available and not copyrighted is potentially fair game for web crawlers.

However, proceed with caution. You should always honour the terms and conditions of a site that you wish to scrape data from, as well as the contents of its robots.txt file. You also need to ensure that any data you scrape is used in a legal way. For example, you should consider copyright issues and data protection laws such as GDPR. Also, be aware that the appeals court decision could be reversed and other laws may apply. This article is not intended to provide legal advice, so please do your own research on this topic. One place to start is Quora, which has some good and detailed questions and answers on it.
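Python's standard library can check robots.txt rules for you through urllib.robotparser. The sketch below parses a hypothetical set of rules directly, with example.com as a placeholder domain; for a real site you would point set_url() at its robots.txt and call read() instead.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents: every user agent is barred from /private/
rules = """
User-agent: *
Disallow: /private/
""".splitlines()

parser = RobotFileParser()
parser.parse(rules)

# can_fetch(user_agent, url) reports whether the rules allow that agent to fetch the URL
print(parser.can_fetch("*", "http://example.com/catalogue/page-1.html"))  # True
print(parser.can_fetch("*", "http://example.com/private/data.html"))      # False
```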

One way you can avoid any potential legal snags while learning how to use Python to scrape websites is to use sites which either welcome or tolerate your activity. One great place to start is Books to Scrape (http://books.toscrape.com/), a web scraping sandbox which we will use in this article.

An example of Web Scraping in Python

You will need to install two common scraping libraries to use the following code. This can be done using

pip install requests

and

pip install beautifulsoup4

in a command prompt. For details on how to install packages in Python, check out Installing Python Packages with Pip.

The requests library handles connecting to and fetching data from your target web-page, while beautifulsoup enables you to parse and extract the parts of that data you are interested in.

Let’s look at an example:
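The following is a sketch of such a program, not necessarily identical to the author's original listing. It fetches the first page of http://books.toscrape.com/ and collects a title and price for each book, using the class names discussed below (product_pod, product_price, price_color). The function names are hypothetical, and passing the raw bytes from response.content lets Beautiful Soup detect the page's encoding itself instead of relying on requests' guess.

```python
import requests
from bs4 import BeautifulSoup

URL = "http://books.toscrape.com/"


def fetch_html(url):
    """Download a page and return its raw bytes."""
    response = requests.get(url, timeout=10)
    response.raise_for_status()  # stop on 4xx/5xx responses
    return response.content


def parse_books(html):
    """Return a [title, price] pair for each book on a listing page."""
    soup = BeautifulSoup(html, "html.parser")
    data = []
    # Each book is wrapped in an element with the class product_pod
    for book in soup.select(".product_pod"):
        title = book.h3.text  # the site shortens long titles in this tag
        price_text = book.select_one("div.product_price p.price_color").text
        price = float(price_text.strip().lstrip("£"))  # drop the pound sign
        data.append([title, price])
    return data


def main():
    data = parse_books(fetch_html(URL))
    print("### RESULTS ###")
    for title, price in data:
        print(title, price)
```

To run it as a script, call main() from an if __name__ == '__main__': block at the bottom of the file; the storage functions from the following sections would go above that block.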

So how does the code work?

In order to be able to do web scraping with Python, you will need a basic understanding of HTML and CSS. This is so you understand the territory you are working in. You don’t need to be an expert but you do need to know how to navigate the elements on a web-page using an inspector such as chrome dev tools. If you don’t have this basic knowledge, you can go off and get it (w3schools is a great place to start), or if you are feeling brave, just try and follow along and pick up what you need as you go along.

To see what is happening in the code above, navigate to http://books.toscrape.com/. Place your cursor over a book price, right-click and select “Inspect” (that's the option on Chrome; it may be something slightly different, like “Inspect Element”, in other browsers). When you do this, a new panel will appear showing you the HTML which created the page. Take particular note of the “class” attributes of the elements you wish to target.

In our code we have

This uses the class attribute and returns a list of elements with the class product_pod.

Then, for each of these elements we have:


The first line is fairly straightforward and just selects the text of the h3 element for the current product. The next line does lots of things, and could be split into separate lines. Basically, it finds the p tag with class price_color within the div tag with class product_price, extracts the text, strips out the pound sign and finally converts to a float. This last step is not strictly necessary as we will be storing our data in text format, but I’ve included it in case you need an actual numeric data type in your own projects.
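Split into separate lines, the price extraction looks roughly like this (run against a stand-in for one product element, not the live page):

```python
from bs4 import BeautifulSoup

# Stand-in for a single product element from books.toscrape.com
html = (
    '<article class="product_pod"><h3><a>A Light in the ...</a></h3>'
    '<div class="product_price"><p class="price_color">£51.77</p></div></article>'
)
book = BeautifulSoup(html, "html.parser").select_one(".product_pod")

price_div = book.find("div", class_="product_price")   # the div with class product_price
price_tag = price_div.find("p", class_="price_color")  # the p with class price_color inside it
price_text = price_tag.text.strip()                    # the visible text, "£51.77"
price = float(price_text.replace("£", ""))             # strip the pound sign, convert to float
print(price)  # 51.77
```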

Storing Scraped Data in CSV Format

CSV (comma-separated values) is a very common and useful file format for storing data. It is lightweight and does not require a database.

Add this code above the if __name__ == '__main__': line

and just before the line print('### RESULTS ###'), add this:

store_as_csv(data, headings=['title', 'price'])

When you run the code now, a file will be created containing your book data in CSV format. Pretty neat, huh?
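For reference, a minimal sketch of what store_as_csv could look like, using Python's built-in csv module. The filename parameter and its default "books.csv" are assumptions; only the data and headings arguments come from the call above.

```python
import csv


def store_as_csv(data, headings, filename="books.csv"):
    """Write rows of scraped data to a CSV file, with a header row first."""
    # newline="" stops the csv module from writing blank lines between rows on Windows
    with open(filename, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(headings)  # e.g. ['title', 'price']
        writer.writerows(data)     # one row per [title, price] pair
```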

Storing Scraped Data in JSON Format

Another very common format for storing data is JSON (JavaScript Object Notation), which is basically a collection of lists and dictionaries (called arrays and objects in JavaScript).

Add this extra code above the if __name__ == '__main__': line

and store_as_json(data) above the print('### RESULTS ###') line.
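Similarly, a minimal sketch of store_as_json using the built-in json module, with a hypothetical filename parameter:

```python
import json


def store_as_json(data, filename="books.json"):
    """Write scraped data to a JSON file."""
    with open(filename, "w", encoding="utf-8") as f:
        # ensure_ascii=False keeps characters such as "£" readable instead of \u escapes;
        # indent=2 makes the file easier to read by eye
        json.dump(data, f, ensure_ascii=False, indent=2)
```

Because json.dump writes Python lists as JSON arrays, each [title, price] pair becomes a two-element array in the file.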

So there you have it – you now know how to scrape data from a web-page, and it didn’t take many lines of Python code to achieve!

Full Code Listing for Python Web Scraping Example

Here’s the full listing of our program for your convenience.

One final note. We have used requests and beautifulsoup for our scraping, and a lot of the existing code on the internet in articles and repositories uses those libraries. However, there is a newer library which performs the task of both of these put together, and has some additional functionality which you may find useful later on. This newer library is requests-HTML and is well worth looking at once you have got a basic understanding of what you are trying to achieve with web scraping. Another library which is often used for more advanced projects spanning multiple pages is scrapy, but that is a more complex beast altogether, for a later article.

Working through the contents of this article will give you a firm grounding in the basics of web scraping in Python. I hope you find it helpful.

Happy computing.